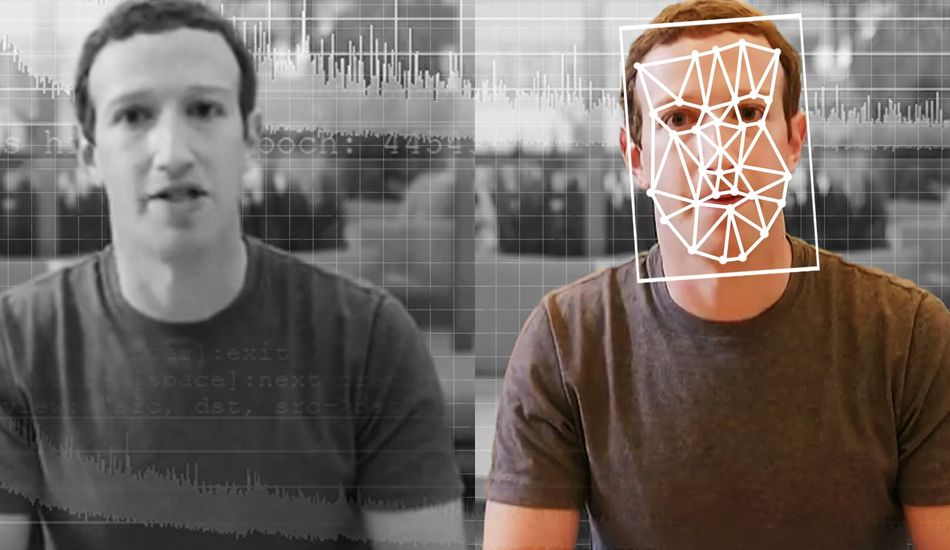

Deepfakes: How AI Fakes Became Reality and the Future Fight Against Them

Okay, so deepfakes. We all knew they were getting better, but 2025 was a whole new level of wild. I'm talking AI-generated faces, voices, the whole shebang, so realistic they're fooling almost everyone. And it's not just the quality; the sheer number of these things is exploding.

Think about it: we went from roughly 500,000 deepfakes online in 2023 to an estimated 8 million in 2025. That's a 16-fold jump in two years, which is insane! The tricky part is that, for everyday stuff like video calls or social media posts, these fakes are now good enough to trick even the most experienced people. It's getting almost impossible to tell what's real and what's not.
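If you want to sanity-check those numbers, here's a quick back-of-the-envelope sketch. It only uses the two counts quoted above, and it assumes steady compounding growth between 2023 and 2025, which is my simplification and not something the estimates themselves claim. The function name `growth_summary` is made up for this example.

```python
def growth_summary(start: int, end: int, years: int) -> tuple[float, float]:
    """Return (overall fold increase, implied per-year multiplier).

    Assumes smooth compounding growth, which is a simplification.
    """
    fold = end / start
    annual = fold ** (1 / years)
    return fold, annual


# Counts quoted in the text: ~500,000 in 2023, ~8 million in 2025.
fold, annual = growth_summary(500_000, 8_000_000, 2)
print(f"{fold:.0f}x overall, roughly {annual:.0f}x per year")
```

Under that assumption, the volume would be quadrupling every year.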

The Tech Behind the Trickery

As a computer scientist who researches this stuff, I can tell you a few things are driving this. First, video realism has jumped ahead thanks to new AI models. These models maintain temporal consistency, meaning the videos have smooth motion and consistent identities. No more weird flickering or distortions that used to give deepfakes away.

Second, voice cloning has hit what I'd call the "indistinguishable threshold." Now, just a few seconds of someone's voice is enough to create a convincing clone, complete with all the natural nuances. This is already causing problems, with retailers reporting thousands of AI-generated scam calls daily.

Consumer tools have lowered the technical barrier to almost zero. With upgrades from OpenAI and Google, anyone can describe an idea, have an AI write a script, and generate polished video in minutes. This means that the ability to create coherent, storyline-driven deepfakes at scale has been democratized.

Put the exploding volume together with personas that are nearly indistinguishable from real humans, and detecting deepfakes gets really difficult, especially when people's attention is fragmented and content moves faster than it can be verified. Deepfakes have already caused real-world harm, from misinformation to targeted harassment and financial scams, and that harm spreads before people realize what is happening.

What's Next? Prepare for Real-Time Deepfakes

Looking ahead, I see deepfakes moving toward real-time synthesis. We're talking about videos that closely mimic a human's appearance in real time, which makes them even harder to detect. The focus is shifting from visual realism to how the fake person behaves over time.

I fully expect to see entire video-call participants being synthesized in real time. Imagine AI-driven actors whose faces, voices, and mannerisms adapt instantly to whatever you say. Or scammers using responsive avatars instead of fixed videos. It's a little terrifying, if you ask me.

As the technology improves, it'll become even harder to tell the difference between real and fake. Detecting deepfakes will depend on things like secure provenance (think digitally signed media) and AI content tools that verify authenticity. Simply looking harder at the pixels won't cut it anymore. The game has changed.
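To make "digitally signed media" a bit more concrete, here's a minimal sketch of the idea: whoever captures or publishes the media attaches a tag computed from its exact bytes, and anyone checking it later recomputes the tag to see whether the bytes changed. Big caveat: real provenance systems use public-key signatures and signed metadata, while this toy uses a shared-secret HMAC from Python's standard library as a stand-in. The key, the function names, and the sample bytes are all made up for illustration.

```python
import hashlib
import hmac

# Hypothetical demo key. A real system would use a private signing key
# (public-key cryptography), not a shared secret hardcoded in source.
SIGNING_KEY = b"demo-key-not-for-production"


def sign_media(media_bytes: bytes, key: bytes = SIGNING_KEY) -> str:
    """Return a hex tag that depends on every byte of the media."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, tag: str, key: bytes = SIGNING_KEY) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_media(media_bytes, key)
    return hmac.compare_digest(expected, tag)


# Stand-in bytes for a captured video file.
original = b"frame-data-from-a-real-camera"
tag = sign_media(original)

# Any edit to the bytes, however small, invalidates the tag.
tampered = original.replace(b"real", b"fake")

print(verify_media(original, tag))   # True
print(verify_media(tampered, tag))   # False
```

The point is that the check never looks at pixels at all. It only asks whether the bytes are the ones that were originally signed, which is why this approach keeps working even when the fake looks perfect.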

Source: Gizmodo